影韵 YING YUN

Exploring the Future of Traditional Art: Interactive Shadow Puppet Art Installations

Integrating Kinect motion capture and parametric modeling technology with K-pop elements to create an innovative experience that fuses traditional shadow puppetry, modern digital interaction, and contemporary pop culture, breathing new life into cultural heritage.

Quick Facts

01

Core Concept

Merging shadow puppetry and K-pop into a creative installation that unites heritage and pop culture.

02

Key Tech

Kinect, Parametric Modeling, Digital Interaction.

03

Artistic Goal

Exploring tradition and modernity through cultural preservation and digital innovation.

04

Format

An immersive installation with real-time motion and interactive storytelling.

How Shadow Puppetry Gradually Declined

Traditional culture preservation is often confined to museums or studies, with limited public engagement and creative use of digital technology.

52.46% of people believe that foreign culture has a significant impact.

54.1% of people believe that shadow puppetry lacks innovation.

67.21% of people believe that the promotion of shadow puppetry is insufficient.

57.38% of people believe there is a lack of sustainable economic benefits.

Background

Revitalizing Tradition Through Modern Innovation

The Legacy of Chinese Shadow Puppetry

Chinese shadow puppetry, a UNESCO Intangible Cultural Heritage, is a traditional art form with over a thousand years of history. Combining storytelling, music, and craftsmanship, it once thrived as a cultural symbol. Today, however, it is losing its audience.

54.1%

Most young people said they had never seen a shadow puppet show before.

50.8%

More than half of the young people said that shadow puppetry is very likely to be lost.

The Global Influence of K-pop

K-pop has become a global cultural phenomenon, with a massive and devoted young audience in China.

By the end of 2023, China led the global Hallyu (Korean wave) fanbase, with 100.8 million active fans out of a total 225 million worldwide.

$25 Billion

According to the latest statement from Korea’s Ministry of Culture, Sports and Tourism, Hallyu exports are expected to grow at an annual rate of 12.3% through 2027.

By examining the decline of shadow puppetry, the rise of K-pop, and digital technology's potential, this project bridges cultural heritage and modern innovation.

Problem to Solution

Related Work

Phase 1

Virtual Pottery: An Interactive Audio-Visual Installation

Combining gesture tracking technology and sound synthesis, this project integrates natural hand gestures with 3D shape and sound creation, offering an immersive audiovisual interactive experience.

Innovation 1:

Enables intuitive 3D shape and sound creation through natural gestures, such as those used in pottery making, tracked with OptiTrack.

Innovation 2:

Enhances the interaction between virtual and real environments by integrating motion capture technology with sound synthesis.

Phase 2

Exploring Visualizations in Real-time Motion Capture for Dance Education

Develops a whole-body interaction interface using real-time motion capture and 3D models to enhance dance learning, improvisation, and creativity through immersive visualizations and gamified experiences.

Innovation 1:

Uses inertial motion capture by Kinect to provide real-time feedback and interactive avatars for exploring movements and visual metaphors.

Innovation 2:

Merges motion visualizations (e.g., movement traces) with virtual objects, enabling creative and reflective dance learning.

Phase 3

Evaluating a Dancer's Performance By Kinect-Based Skeleton Tracking

Evaluates dance performances by comparing them to a gold-standard performance, providing real-time feedback and visualization in a 3D virtual environment using Kinect-based skeleton tracking.

Innovation 1:

Utilizes Kinect-based skeleton tracking combined with signal processing to automatically align and evaluate dance performances in real-time.

Innovation 2:

Provides visual feedback and evaluation scores in a 3D virtual environment, allowing users to analyze their movements from multiple perspectives.

System Architecture

Key Modules

The system can be divided into the following key modules:

Motion Capture Module

Captures real-time motion data from the performer using Kinect sensors.

Key Components:

- Kinect sensor for skeletal tracking.
- Data preprocessing pipeline to clean and filter raw motion data.
- Skeleton extraction for mapping human movements.

Data Processing Module

Processes motion data to map human movements to the shadow puppet model.

Key Components:

- Motion mapping algorithms to translate Kinect skeletal data to puppet movements.
- Calibration tools to adjust for differences between human and puppet proportions.
- Real-time motion smoothing to ensure fluid animation.
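The smoothing step could be approached with an exponential moving average over joint positions. The following is a minimal Python sketch under stated assumptions, not the project's actual code: the `JointSmoother` class, the joint names, and the `alpha` value are all hypothetical.

```python
from typing import Dict, Tuple

Vec3 = Tuple[float, float, float]


class JointSmoother:
    """Exponential moving average over per-joint 3D positions (illustrative)."""

    def __init__(self, alpha: float = 0.5) -> None:
        # alpha close to 1 follows the raw data; close to 0 smooths heavily.
        if not 0.0 < alpha <= 1.0:
            raise ValueError("alpha must be in (0, 1]")
        self.alpha = alpha
        self._state: Dict[str, Vec3] = {}

    def update(self, frame: Dict[str, Vec3]) -> Dict[str, Vec3]:
        """Blend each incoming joint position with its previous smoothed value."""
        smoothed: Dict[str, Vec3] = {}
        for name, pos in frame.items():
            prev = self._state.get(name)
            if prev is None:
                new = pos  # first sample: nothing to blend with yet
            else:
                new = tuple(
                    self.alpha * p + (1.0 - self.alpha) * q
                    for p, q in zip(pos, prev)
                )
            self._state[name] = new
            smoothed[name] = new
        return smoothed
```

A lower `alpha` reduces jitter but adds visible lag, so the value would need tuning against the latency the performance can tolerate.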

Visualization Module

Animates the shadow puppet and generates the visual output for performance.

Key Components:

- 3D rigged shadow puppet models.
- Rendering engine for real-time animation.
- Projection system for displaying animated performances.

Output Module

Handles the final projection of the animated shadow puppet performance.

Key Components:

- Projector or screen for visual output.
- Synchronization of audio (e.g., music or narration) with puppet movements.
- Recording functionality for saving performances.

Flowchart Framework for the System

The flowchart illustrates the interaction and data flow between the modules.

Start → Motion Capture → Data Processing → Visualization → Output → End
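The data flow between the four modules can be pictured as a chain of functions, each consuming the previous module's result. This is a rough Python sketch only: the function names, the hard-coded frame, and the 800×600 canvas with a scale of 300 pixels per metre are assumptions, and a real capture stage would read from the Kinect instead.

```python
from typing import Dict, List, Tuple

Frame3D = Dict[str, Tuple[float, float, float]]
Frame2D = Dict[str, Tuple[float, float]]
Pixels = Dict[str, Tuple[int, int]]


def capture() -> Frame3D:
    """Stand-in for the Motion Capture module: returns one hard-coded frame."""
    return {"head": (0.0, 0.8, 2.0), "hand_right": (0.5, 0.2, 2.0)}


def process(frame: Frame3D) -> Frame2D:
    """Data Processing: drop depth, since the flat puppet only uses x and y."""
    return {name: (x, y) for name, (x, y, _z) in frame.items()}


def visualize(points: Frame2D, width: int = 800, height: int = 600,
              scale: float = 300.0) -> Pixels:
    """Visualization: convert metres to pixel coordinates (y axis flipped)."""
    return {
        name: (round(width / 2 + x * scale), round(height / 2 - y * scale))
        for name, (x, y) in points.items()
    }


def output(pixels: Pixels) -> List[str]:
    """Output: format lines that a renderer or recorder could consume."""
    return [f"{name} {px} {py}" for name, (px, py) in sorted(pixels.items())]


def run_once() -> List[str]:
    """One pass through the modules in flowchart order."""
    return output(visualize(process(capture())))
```

Keeping each module behind a plain function boundary like this would let any one stage be swapped out (for example, a recorded motion file in place of live capture) without touching the others.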

Interaction Study

Intro: Exploring Interaction through Motion and Modeling

This section outlines the Interaction Study’s goal: integrating Kinect motion capture with Grasshopper modeling to develop a shadow puppet system, exploring real-time motion tracking in interactive art and design.

1. Objective:

Use Kinect to capture human movements and output them in real time to a shadow-puppet character model, exploring the possibilities of interactive art.

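2. Key Tools:

Kinect (motion capture), Rhino with Grasshopper (parametric modeling), and Visual Studio (data integration).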

3. Goal:

Implement a human–computer interaction system that enables real-time synchronization between human movements and a virtual model.

Section 1: Setting Up Kinect for Motion Capture

Setting up Kinect to accurately track body movements and skeletal structures, focusing on reliable motion data capture.

1. Hardware Setup:

- Connect the Kinect to the computer and install the required drivers.
- Ensure the device functions properly and is compatible with the testing environment.

2. Motion Tracking:

- Test the Kinect’s skeletal tracking function to capture major human joints and movements.
- Verify that the device can recognize basic actions (e.g., gestures, raising hands, walking).
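A basic action check such as "raising hands" can be expressed directly on joint positions. The sketch below is illustrative Python, not the project's test code: the joint names, the `margin` value, and the convention that the y axis points upward are assumptions.

```python
from typing import Dict, Tuple

Vec3 = Tuple[float, float, float]


def is_hand_raised(joints: Dict[str, Vec3], margin: float = 0.05) -> bool:
    """Return True if either hand is above the head by at least `margin` metres.

    Assumes y points up and that the joint names below are present in `joints`
    (hands may be missing; the head must be there).
    """
    head_y = joints["head"][1]
    return any(
        joints[hand][1] > head_y + margin
        for hand in ("hand_left", "hand_right")
        if hand in joints
    )
```

Running a handful of such checks against live data is one quick way to confirm that tracking works before connecting the puppet model.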

3. Challenges and Solutions:

- Issue: The device connection was unstable, with occasional data loss.
- Solution: Recalibrated the device position and optimized lighting conditions to improve tracking accuracy.

Section 2: Building the Shadow Puppet Model in Grasshopper

A shadow puppet model was created in Rhino using Grasshopper, structured to mimic human movement and replicate real-time motion data from Kinect.

1. Model Design:

- Design a shadow-puppet character inspired by traditional shadow puppetry.
- The model includes joints and movable parts to simulate human movements.

* The sketch depicts a modernized shadow-puppet design, combining traditional silhouette aesthetics with articulated joints for dynamic movement.

* The image shows the parametric modeling and basic color rendering of the modernized shadow puppet, highlighting its articulated structure and visual form.

* The image illustrates the finalized dimensions of the modernized shadow puppet and its physical prototype, produced via laser cutting.

2. Grasshopper Workflow:

- Create a parametric model using Grasshopper, defining the geometry of the skeleton and joints.
- Each joint of the model corresponds directly to Kinect’s skeletal data.

3. Key Features:

- Flexible joint design supports complex movements.
- The model can adjust in real time in response to input motion data.
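One way a jointed model can follow skeletal data while keeping its own proportions is to transfer limb angles rather than raw positions. The Python sketch below illustrates that idea only; it is not the Grasshopper definition itself, and the function names, the 2D simplification, and the y-up convention are assumptions.

```python
import math
from typing import Tuple

Vec2 = Tuple[float, float]


def limb_angle(parent: Vec2, child: Vec2) -> float:
    """Angle in degrees of the parent-to-child segment in the x-y plane."""
    return math.degrees(math.atan2(child[1] - parent[1], child[0] - parent[0]))


def place_puppet_limb(anchor: Vec2, angle_deg: float, length: float) -> Vec2:
    """End point of a puppet limb of fixed `length` rotated about `anchor`."""
    a = math.radians(angle_deg)
    return (anchor[0] + length * math.cos(a), anchor[1] + length * math.sin(a))


# Example: a human upper arm hanging straight down drives a puppet arm
# that has its own length (50 units) and its own shoulder position.
shoulder, elbow = (0.0, 0.0), (0.0, -0.3)
angle = limb_angle(shoulder, elbow)
puppet_elbow = place_puppet_limb((100.0, 200.0), angle, 50.0)
```

Because only the angle crosses from performer to puppet, differences in arm length or body height between the two do not distort the pose.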

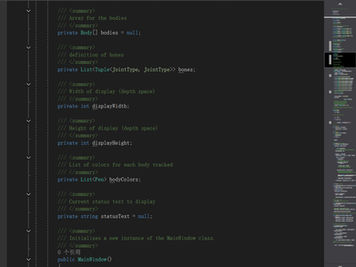

Section 3: Data Integration via Visual Studio

Integrated Kinect with Grasshopper via Visual Studio, processing motion data to enable real-time interaction with the shadow puppet model.

1. Data Flow:

- After Kinect captures the human skeletal data, it is processed through Visual Studio.
- The data is transmitted to Grasshopper to drive the shadow puppet model’s movements.

* The image illustrates how Visual Studio processes Kinect skeletal data, capturing joint movements for real-time use.

2. Technical Implementation:

- Write code in Visual Studio to parse Kinect data (joint coordinates).
- Use network protocols or plugins to transmit the data to Grasshopper in real time.
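As an illustration of the transmission step, joint coordinates could be packed into a small message and sent over a socket. The sketch below uses Python, JSON, and UDP purely as assumptions; the project's own code was written in Visual Studio, and the message layout, function names, and transport here are hypothetical.

```python
import json
import socket
from typing import Dict, Tuple

Vec3 = Tuple[float, float, float]


def encode_frame(frame_id: int, joints: Dict[str, Vec3]) -> bytes:
    """Serialize one skeleton frame as compact UTF-8 JSON."""
    payload = {"frame": frame_id, "joints": {k: list(v) for k, v in joints.items()}}
    return json.dumps(payload, separators=(",", ":")).encode("utf-8")


def decode_frame(data: bytes) -> Tuple[int, Dict[str, Vec3]]:
    """Inverse of encode_frame, as a receiver would run it."""
    payload = json.loads(data.decode("utf-8"))
    joints = {k: tuple(v) for k, v in payload["joints"].items()}
    return payload["frame"], joints


def send_frame(sock: socket.socket, address: Tuple[str, int],
               frame_id: int, joints: Dict[str, Vec3]) -> int:
    """Send one frame as a single UDP datagram; returns the bytes sent."""
    return sock.sendto(encode_frame(frame_id, joints), address)
```

A sender would create the socket once with `socket.socket(socket.AF_INET, socket.SOCK_DGRAM)` and call `send_frame` per frame; the frame number lets the receiver discard datagrams that arrive late or out of order.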

The images show the Visual Studio setup for sending Kinect skeletal motion data to Grasshopper via serial communication, including code for data explanation, definition, capture, and transmission.

3. Challenges and Debugging:

- Issue: Data transmission delay caused unsynchronized movements.
- Solution: Optimized the code logic to reduce transmission latency.

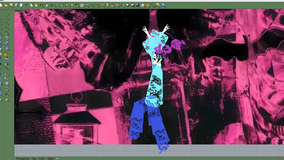

Section 4: Real-time Testing with a K-pop Dance

A K-pop dance segment was used to test the system’s real-time accuracy, responsiveness, and overall performance in capturing and replicating human movements.

1. Testing Setup:

- Selected a K-pop dance segment (Jisoo’s “Flower”).
- Kinect captured the dance movements and transmitted them in real time to the shadow puppet model.

2. Performance Evaluation:

- Whether the skeletal movements are accurately captured.
- Whether the Grasshopper model can smoothly reflect the input movements in real time.
- Whether the latency is within an acceptable range.
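The latency criterion can be made measurable by timestamping each frame at capture and again when the model updates. The following Python sketch shows one way to summarize those timestamps; the function name and the 100 ms budget are assumptions for illustration, not figures from the project.

```python
from statistics import mean
from typing import Dict, Sequence, Union


def latency_report(capture_times: Sequence[float],
                   render_times: Sequence[float],
                   budget_ms: float = 100.0) -> Dict[str, Union[float, bool]]:
    """Summarize per-frame latency from paired timestamps given in seconds."""
    if len(capture_times) != len(render_times) or not capture_times:
        raise ValueError("need equally sized, non-empty timestamp lists")
    latencies = [(r - c) * 1000.0 for c, r in zip(capture_times, render_times)]
    return {
        "mean_ms": mean(latencies),
        "max_ms": max(latencies),
        "within_budget": max(latencies) <= budget_ms,
    }


# Example with two frames: 62.5 ms and 125 ms end-to-end delay.
report = latency_report([0.0, 1.0], [0.0625, 1.125])
```

Reporting the maximum alongside the mean matters for dance, where a single late frame is more noticeable than a slightly higher average.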

3. Limitations:

- Issue: Complex movements, especially when the shadow puppet is viewed from the side, may reduce recognition accuracy.
- Improvement: Optimize algorithms to enhance the recognition and real-time performance of complex actions.
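One possible mitigation, offered here as an assumption rather than the project's chosen approach, is to hold a joint's last reliably tracked position for a few frames whenever the sensor can only guess at it, for example when a limb is occluded in a side view. The Python sketch below uses hypothetical state labels and a hypothetical `PoseHold` class.

```python
from typing import Dict, Tuple

Vec3 = Tuple[float, float, float]

# Hypothetical labels for a per-joint tracking state reported by the sensor.
TRACKED = "tracked"
INFERRED = "inferred"


class PoseHold:
    """Reuse the last tracked position of a joint for a limited number of frames."""

    def __init__(self, max_hold_frames: int = 15) -> None:
        self.max_hold_frames = max_hold_frames
        self._last_good: Dict[str, Vec3] = {}
        self._held: Dict[str, int] = {}

    def update(self, frame: Dict[str, Tuple[Vec3, str]]) -> Dict[str, Vec3]:
        out: Dict[str, Vec3] = {}
        for name, (pos, state) in frame.items():
            if state == TRACKED:
                # Reliable sample: remember it and reset the hold counter.
                self._last_good[name] = pos
                self._held[name] = 0
                out[name] = pos
            elif name in self._last_good and self._held[name] < self.max_hold_frames:
                # Unreliable sample: keep showing the last reliable position.
                self._held[name] += 1
                out[name] = self._last_good[name]
            else:
                # Held too long (or never tracked): accept the sensor's guess.
                out[name] = pos
        return out
```

The hold limit keeps the puppet from freezing indefinitely: after `max_hold_frames` unreliable frames the filter falls back to whatever the sensor reports.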

Hero Image: Final Showcase of the Shadow Puppet System

The final showcase demonstrates the integration of motion capture and real-time modeling through dynamic, interactive shadow puppet performances.

1. Key Features:

- Real-time motion capture and output.
- Highly accurate reproduction of human movement.
- An innovative form of interactive artistic expression.

2. Visual Output:

- Showcase the final dynamic performance of the virtual shadow puppet model.

Video

How we use Kinect to capture a user’s motion in real time and bring shadow puppets to life by making them dance to K-pop.

Reflection and Future Work

YING YUN successfully combines traditional shadow puppetry with Kinect-based motion capture, bringing the art form into the modern era. By enabling real-time animation and performances like K-pop dances, we have made shadow puppetry more engaging and appealing to contemporary audiences while preserving its cultural essence.

Enhance Interactivity

Develop more user-friendly interfaces for performers and audiences.

Expand Motion Library

Add diverse movements and dance styles to enrich storytelling.

Build Online Platforms

Enable global audiences to experience and interact with shadow puppetry remotely.

These steps will help further modernize shadow puppetry while respecting its traditional roots.